Raghav Kumar

Last updated: 23 February, 2025

I'm a full-stack Computer Engineer with 4 years of experience at Microsoft, and I am currently pursuing an MS at The Pennsylvania State University, University Park, specializing in Computer Security and Engineering for AI. I have worked on most layers of computer engineering, from compilers and CUDA kernels for GPUs to web applications and big data analysis pipelines.

Also, I love to read, especially about History and Economics, to learn about how we got here.

You can find my resume here, some of the interesting things that I'm working on below, and a few recommendations at the end.

Ongoing projects and notes

- I’m currently working on a semester-long project to identify algorithmic or AI-based methods for detecting unexpected behaviour in systems built on Cardano. You can find our first draft proposal here.

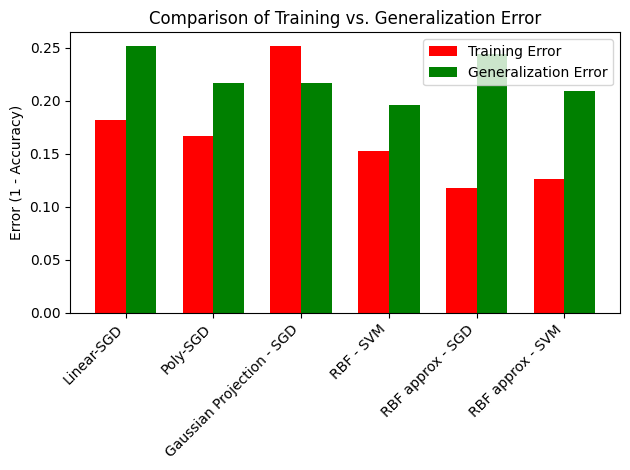

- Machine learning is the magic of mathematics. One set of tricks involves expanding the input into many more dimensions, which should make it more expressive and easier to learn from. This can be done via the kernel trick (implicitly expanding the input to infinitely many dimensions while only ever computing the output of a “kernel” function), by randomly sampling a few thousand of those infinitely many features, or by multiplying the input with a random matrix to obtain a Gaussian projection. All these tricks should make the data easier to learn and the resulting model more accurate. Furthermore, linear classification can be done using either Stochastic Gradient Descent or Support Vector Machines. So which pair of feature expansion and linear classifier works best? Check out the image below and head to this page.

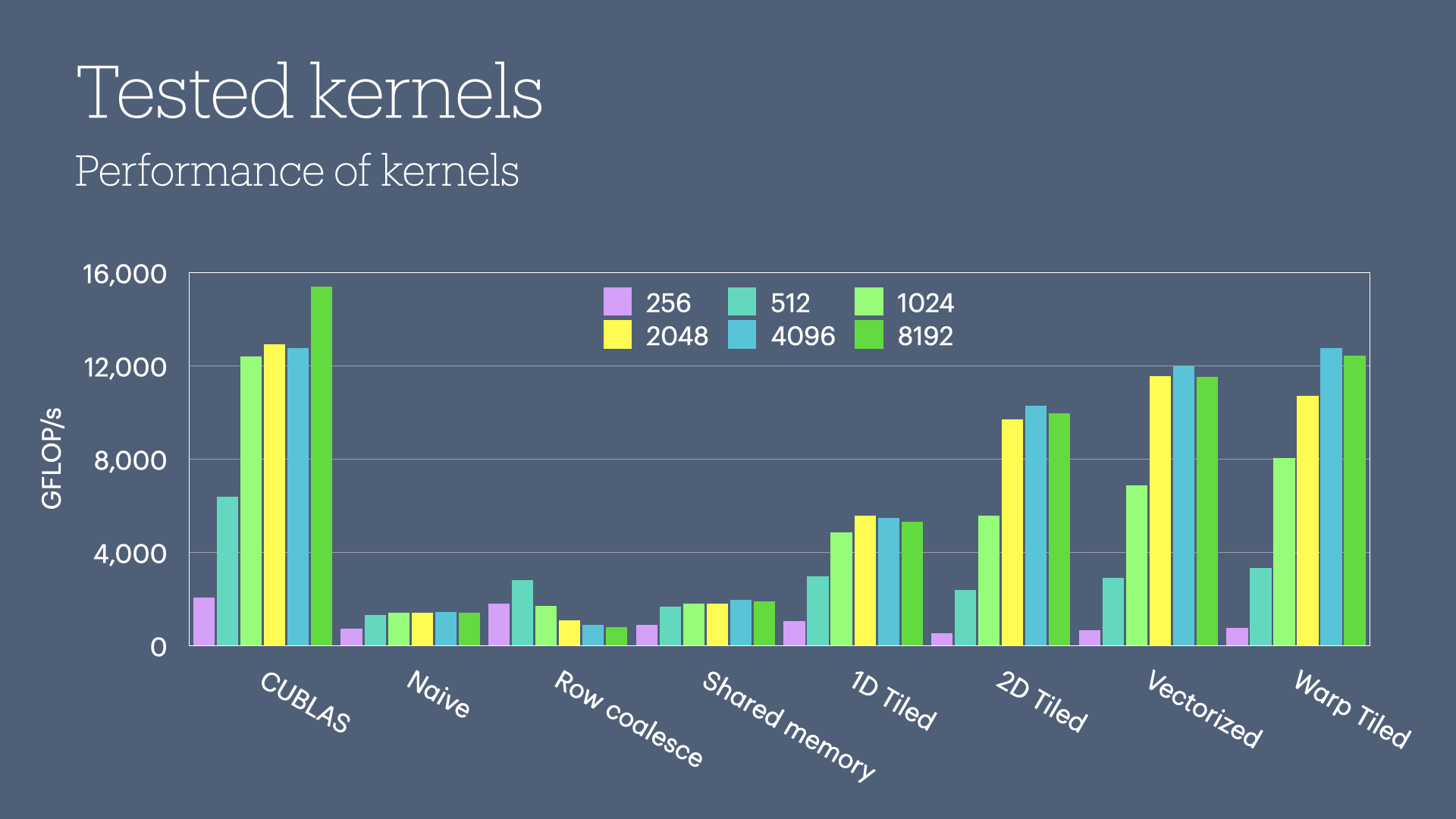

- GPUs make computations fast, especially when you want to perform the same operation on every piece of data. Multiplying two matrices is a computing problem that fits this bill perfectly. However, not all code that computes matrix multiplication on a GPU is created equal, and some techniques are better than others. Have a look at the chart below and head to this repository to learn more.

- My Writing.com Portfolio
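
As a rough illustration of the feature-expansion note above, here is a minimal sketch of the "randomly sample some of the infinite features" idea. It assumes the kernel is the RBF kernel and uses random Fourier features, which is one standard way to do this and not necessarily the exact method used on the linked page. The names `rbf_kernel`, `make_random_features`, and `dot` are hypothetical helpers written for this example.

```python
import math
import random


def rbf_kernel(x, y, gamma):
    """Exact RBF kernel: exp(-gamma * ||x - y||^2)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


def make_random_features(dim, n_features, gamma, seed=0):
    """Build a map from a dim-vector to n_features random Fourier features.

    Assumption: the target kernel is the RBF kernel above, so the random
    frequencies are drawn from a Gaussian with variance 2 * gamma.
    """
    rng = random.Random(seed)
    scale = math.sqrt(2.0 * gamma)
    weights = [
        [rng.gauss(0.0, scale) for _ in range(dim)] for _ in range(n_features)
    ]
    offsets = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_features)]
    norm = math.sqrt(2.0 / n_features)

    def transform(x):
        # Each feature is a randomly shifted cosine of a random projection of x.
        return [
            norm * math.cos(sum(w_i * x_i for w_i, x_i in zip(w, x)) + b)
            for w, b in zip(weights, offsets)
        ]

    return transform


def dot(u, v):
    """Plain inner product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


if __name__ == "__main__":
    phi = make_random_features(dim=2, n_features=2000, gamma=0.5)
    x, y = [0.5, -1.0], [1.0, 0.25]
    # The inner product of the expanded vectors approximates the kernel value.
    print("exact kernel :", rbf_kernel(x, y, 0.5))
    print("approximation:", dot(phi(x), phi(y)))
```

The point of the sketch is that a linear classifier trained on `phi(x)` behaves approximately like a kernel method, without ever forming the full kernel matrix. The approximation generally tightens as `n_features` grows.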

Recommendations

I would humbly recommend checking out the following works, which have inspired me:

- Ayn Rand (The Fountainhead and Atlas Shrugged)

- Walter Isaacson (The Innovators)

- Dale Carnegie (How to Win Friends and Influence People)

- Acharya Prashant

- Everything is Everything Podcast

- What we see and what we don’t see by Frédéric Bastiat